Spec-driven development is older than AI, but agent workflows make the boundary between design artifact and executable logic newly important.

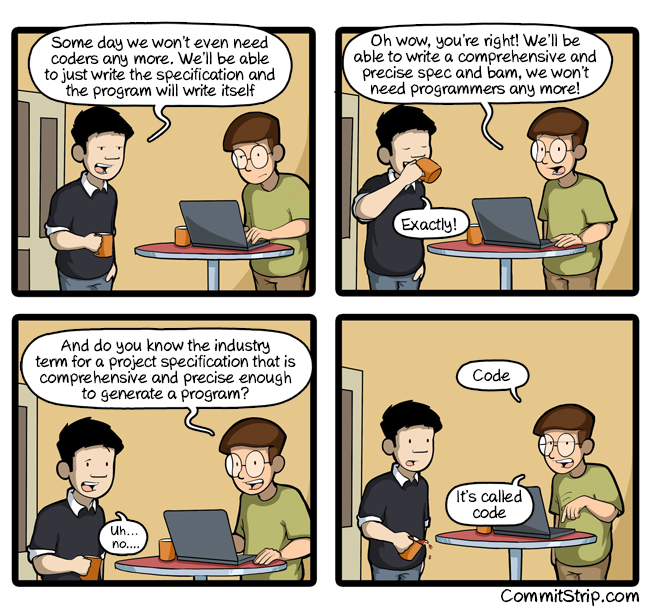

(Source: “A very comprehensive and precise spec” from CommitStrip)

An Older Engineering Habit Under New Pressure

Picture a platform team reviewing an internal agent workflow for customer support.

They are debating a scheduler that fans out work across multiple agents, retries failed steps, persists thread state, and hands off certain actions to a ticketing extension. One engineer wants to write the logic directly. Another wants a design document first. A third wants an RFC with interface contracts and failure semantics before anyone touches the service loop.

Long before coding agents, teams already wrote protocol specs, design docs, implementation plans, interface contracts, and technical notes to force clarity before code hardened the wrong assumptions. The names were inconsistent and often used loosely, but the underlying habit was familiar: some artifacts were meant to preserve intent, some to preserve behavior, and some to preserve the exact mechanism.

What AI changes is not the existence of that habit, but the cost structure around it.

Models forget, sessions reset, and tasks get delegated across agents and across days. A design decision that once lived in a senior engineer’s head or in a meeting can now become a persistent control surface for a toolchain. That shift makes specification artifacts more valuable, but it also makes sloppy ones more dangerous. If the document is vague, the model fills in the gap. If the document is over-precise, it starts turning into pseudocode. If the document mixes intent, contract, and mechanism carelessly, humans and agents both lose the plot.

Two recent cases make this tension concrete. The Symphony case, discussed in the essay “A Sufficiently Detailed Spec Is Code,” shows how an agent-facing spec can expand until it is very close to code. CodeSpeak explores the opposite possibility: perhaps a smaller maintained spec can govern a much larger implementation surface if the right workflow surrounds it.

Taken together, these cases do not answer whether specs replace code. They clarify a narrower and more useful question: what should a spec carry, what should code carry, and what changes when AI agents sit between them?

Older Documents Already Split the Work, Even When They Did Not Say So

Arguments about spec-first development often become confused because the word specification is doing too much work.

In older engineering practice, teams used terms such as spec, design, plan, technical note, RFC, and reference implementation with a good deal of looseness. Those labels were not identical, but they were often pointing at three recurring functions:

| Artifact | Typical older names | What it preserves | What agents gain from it | What it tends to lose |
|---|---|---|---|---|
| Intent spec | design doc, product spec, technical note | goals, constraints, operating context, non-goals | durable task framing across sessions | local execution detail |
| Behavioral contract | RFC, API spec, schema, test plan | examples, invariants, interface rules, failure semantics | bounded generation and review | full mechanism and edge-case ordering |
| Code | implementation, reference implementation | exact control flow, state transitions, runtime interaction | the narrowest executable interface | high-level rationale |

Older teams already worked across these layers. What is different now is that agent toolchains put pressure on them to become more explicit. A human can often recover an omitted assumption from context, history, or conversation. A model may replace that omission with a plausible default that is locally fluent and globally wrong.

This is why spec-driven development remains a real engineering practice rather than a historical curiosity. A good spec can still be shorter than code when it preserves stable intent or a compact contract over familiar patterns. A spec also converges toward code when it must pin down state machines, retries, persistence behavior, access control, or extension ownership with very little room for interpretation.

That convergence is not a scandal. It is a signal that the artifact has moved from broad guidance toward a narrow interface.

Symphony Shows the Precision Tax

The Symphony case is useful because it shows that movement in the open.

In the reference notes, Symphony is described as an agent orchestrator generated from a SPEC.md file. But that file is also described as containing schema dumps, concurrency formulas, retry backoff equations, cheat-sheet summaries for the model, and “reference algorithms” that behave like functions written in Markdown.

An illustrative fragment looks like this:

|

|

That is not yet executable code, but it is already carrying implementation-level burden. A code version is only one step away:

|

|

This happens for understandable reasons. Orchestration systems are dense with ambiguity-sensitive behavior. Small differences in slot accounting, retry ownership, timeout handling, cancellation order, or extension validation can change whether the service is merely untidy or operationally wrong. Once a document must answer those questions precisely enough that multiple agents or humans will implement them the same way, the prose starts paying many of the same costs as code.
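To see how little distance separates precise prose from code, consider a hypothetical spec sentence (not taken from Symphony's SPEC.md): "the retry delay doubles with each attempt from a base value, is capped at a maximum, and the scheduler never dispatches more work than it has free slots." A minimal Python sketch of that sentence follows. The function names and the default values for `base_delay_s` and `max_delay_s` are assumptions made for illustration.

```python
def retry_delay_s(attempt: int, base_delay_s: float = 1.0, max_delay_s: float = 60.0) -> float:
    """Exponential backoff: base * 2^(attempt - 1), capped at max_delay_s."""
    if attempt < 1:
        raise ValueError("attempt is 1-based")
    return min(max_delay_s, base_delay_s * 2 ** (attempt - 1))


def free_slots(max_concurrent_agents: int, running: int) -> int:
    """Slots the scheduler may still fill; never negative."""
    return max(0, max_concurrent_agents - running)


def pick_to_dispatch(queue: list[str], max_concurrent_agents: int, running: int) -> list[str]:
    """Take work from the front of the queue, limited by the free slots."""
    return queue[: free_slots(max_concurrent_agents, running)]
```

The prose sentence and the functions carry almost the same information. What the code adds is the set of decisions the sentence left open: whether attempts are counted from zero or one, what happens when `running` already exceeds the limit, and which end of the queue is served first.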

That is the diagnosis, but the more interesting part is what follows from it.

The Symphony lesson is not that specs are useless or dishonest. The better lesson is that agent-facing specs need stronger discipline than ordinary project prose. If they fail to separate system purpose from local mechanism, they become bloated and still remain unreliable. If they avoid precision where the runtime demands it, they delegate the hard part back to the model and hope the gap closes itself.

The reliability issues described in the notes reinforce this point. A spec can be detailed enough to look serious and still fail to produce a dependable implementation. The Haskell generation attempts reportedly needed repairs, and even a version that appeared to run could stall on a simple operational task. The YAML comparison in the notes pushes the lesson further: even mature, widely used specifications with conformance tests leave room for drift. The problem is not just “write more detail.” The problem is deciding which detail belongs in which artifact and which checks will catch disagreement.

What Symphony Suggests About Better Specs

Viewed constructively, the Symphony case suggests a better standard for agent-facing specification work.

First, the document needs a clear global model before it accumulates local rules. A list of validation clauses or formulas is not enough if the reader cannot say what the scheduler is trying to optimize, which component owns retries, or which state transitions are meant to be impossible.

Second, invariants need to be named explicitly. “Do not exceed max_concurrent_agents” or “a retry must not duplicate side effects after an extension reports success” are more useful than prose that merely implies those constraints.
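One way to keep named invariants from dissolving back into prose is to give each one an identifier and a check. The sketch below is illustrative only: `SchedulerState`, the `INV-1` and `INV-2` labels, and the in-memory sets are assumptions, not part of Symphony or any real scheduler.

```python
from dataclasses import dataclass, field


class InvariantViolation(Exception):
    """Raised when a named invariant from the spec would be broken."""


@dataclass
class SchedulerState:
    max_concurrent_agents: int
    running: set[str] = field(default_factory=set)
    # Steps for which an extension has already reported success.
    succeeded_steps: set[str] = field(default_factory=set)


def start_agent(state: SchedulerState, agent_id: str) -> None:
    # INV-1: do not exceed max_concurrent_agents.
    if len(state.running) >= state.max_concurrent_agents:
        raise InvariantViolation("INV-1: max_concurrent_agents exceeded")
    state.running.add(agent_id)


def retry_step(state: SchedulerState, step_id: str) -> None:
    # INV-2: a retry must not duplicate side effects after reported success.
    if step_id in state.succeeded_steps:
        raise InvariantViolation("INV-2: step already succeeded, retry refused")
    # A real scheduler would re-enqueue the step here.
```

The identifiers matter as much as the checks. A reviewer, a test, and an agent prompt can all refer to `INV-2` and mean the same constraint.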

Third, interfaces should be grounded in typed schemas and concrete examples wherever possible. If an extension contract matters, the document should show the expected request and response shape, the ownership of validation, and the failure behavior. That gives both humans and agents something firmer than descriptive prose.
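As a sketch of what grounding an interface can look like, here is a hypothetical contract for a ticketing extension written as typed structures plus a validating parser. Every field name, status value, and example payload is an assumption made for illustration, not a real extension API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CreateTicketRequest:
    thread_id: str
    summary: str
    idempotency_key: str  # lets the extension detect repeated requests


@dataclass(frozen=True)
class CreateTicketResponse:
    status: str  # one of: "created", "duplicate", "rejected"
    ticket_id: Optional[str] = None
    error: Optional[str] = None


def parse_response(payload: dict) -> CreateTicketResponse:
    """Validation is owned by the caller: malformed responses fail loudly."""
    status = payload.get("status")
    if status not in ("created", "duplicate", "rejected"):
        raise ValueError(f"unknown status: {status!r}")
    if status == "rejected":
        if not payload.get("error"):
            raise ValueError("rejected responses must carry an error")
        return CreateTicketResponse(status, error=payload["error"])
    if not payload.get("ticket_id"):
        raise ValueError(f"{status} responses must carry a ticket_id")
    return CreateTicketResponse(status, ticket_id=payload["ticket_id"])


# Concrete examples that belong next to the schema in the spec.
EXAMPLE_CREATED = {"status": "created", "ticket_id": "T-1"}
EXAMPLE_REJECTED = {"status": "rejected", "error": "summary too long"}
```

The examples do real work here. They give a model a shape to imitate and give a reviewer a quick way to spot a response that the prose would have allowed but the contract should not.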

Fourth, pseudocode should be local rather than ambient. Some parts of a system genuinely benefit from a reference algorithm. A service loop, a retry scheduler, or a conflict-resolution rule may need one. But once the entire document starts drifting into code-shaped prose, the team loses the advantage of having distinct artifacts at all.

Finally, the document should separate intent, contract, and mechanism instead of layering them into one undifferentiated Markdown file. A model can survive complexity better when the artifact itself makes clear which statements are goals, which are interface rules, and which are implementation sketches.

For a human team, these choices improve readability. For an agent toolchain, they also improve control. A better spec is not simply a longer spec. It is one where the model knows which parts are negotiable, which are binding, and where it is expected to infer versus obey.
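One lightweight way to make that distinction visible is to tag each statement with its layer and with whether it is binding. The following is a small sketch under assumed names (`Layer`, `Statement`, `render_for_agent`) and invented example statements, not a description of any existing tool.

```python
from dataclasses import dataclass
from enum import Enum


class Layer(Enum):
    INTENT = "intent"
    CONTRACT = "contract"
    MECHANISM = "mechanism"


@dataclass(frozen=True)
class Statement:
    layer: Layer
    text: str
    binding: bool  # True: the agent must obey; False: the agent may adapt


SPEC = [
    Statement(Layer.INTENT, "Support threads must not produce duplicate tickets.", True),
    Statement(Layer.CONTRACT, "Never run more than max_concurrent_agents at once.", True),
    Statement(Layer.MECHANISM, "Sketch: poll the queue once per loop tick.", False),
]


def render_for_agent(spec: list[Statement]) -> str:
    """Render each statement with explicit layer and obligation tags."""
    lines = []
    for s in spec:
        tag = "MUST" if s.binding else "MAY ADAPT"
        lines.append(f"[{s.layer.value}][{tag}] {s.text}")
    return "\n".join(lines)
```

Calling `render_for_agent(SPEC)` yields one tagged line per statement, so the same source can feed a human review and an agent prompt without the two readers guessing differently about what is negotiable.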

CodeSpeak Is Best Read as a Workflow Pattern

CodeSpeak matters for a different reason. It is less interesting as a slogan and more interesting as a workflow hypothesis.

The obvious slogan is “maintain specs, not code.” Read carelessly, that sounds like a universal replacement claim. Read more carefully, it sounds like a claim that a team can maintain a smaller, longer-lived control surface over generated code if the mapping from intent to implementation is repetitive enough and the verification loop is strong enough.

That reading is more persuasive.

The case studies in the notes fit a particular profile: bounded modules, familiar implementation patterns, and behavior that can be checked by tests or examples. Subtitle parsing, small generators, encoding detection, and file-format conversion are not trivial, but they live in a region where a concise maintained artifact may plausibly govern a larger generated implementation.

Seen that way, CodeSpeak is not only a product. It is a generalized development pattern with four recurring moves:

- Maintain a persistent spec artifact that survives across sessions.

- Regenerate code from spec diffs rather than rewriting the whole system from scratch.

- Allow handwritten and generated code to coexist instead of forcing an all-or-nothing migration.

- Summarize existing code back into spec-like artifacts when the team needs a durable handle for future changes.

Many current coding agents can approximate parts of this pattern even without CodeSpeak itself. A team can version a design artifact, preserve promptable contracts next to tests, regenerate a narrow module after a spec change, and use code-to-spec summaries to support refactors or language migrations.
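The second and third moves can be approximated with very little machinery. The sketch below is not how CodeSpeak works internally; it is an assumed, minimal scheme in which each spec section owns one module, changed sections are detected by hash, and handwritten modules are never selected for regeneration.

```python
import hashlib


def section_hashes(sections: dict[str, str]) -> dict[str, str]:
    """Map each spec section name to a hash of its text."""
    return {
        name: hashlib.sha256(text.encode("utf-8")).hexdigest()
        for name, text in sections.items()
    }


def modules_to_regenerate(
    old_spec: dict[str, str],
    new_spec: dict[str, str],
    owners: dict[str, str],  # spec section name -> module path (hypothetical mapping)
    handwritten: set[str],   # module paths that must stay untouched
) -> list[str]:
    """Return generated modules whose governing spec section was added or changed."""
    old_h, new_h = section_hashes(old_spec), section_hashes(new_spec)
    changed = [name for name in new_h if old_h.get(name) != new_h[name]]
    targets = {owners[name] for name in changed if name in owners}
    return sorted(targets - handwritten)


old = {"parser": "Parse SRT cues.", "encoder": "Detect UTF-8 or Latin-1."}
new = {"parser": "Parse SRT and VTT cues.", "encoder": "Detect UTF-8 or Latin-1.", "cli": "Add --out."}
owners = {"parser": "parser.py", "encoder": "encoder.py", "cli": "cli.py"}
print(modules_to_regenerate(old, new, owners, handwritten={"cli.py"}))  # ['parser.py']
```

Even this toy version exposes the real design questions: who maintains the section-to-module mapping, and what happens when one spec change should ripple into a handwritten file that the scheme refuses to touch.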

That does not mean the pattern emerges automatically from an empty chat window. Contemporary coding agents do not magically reproduce CodeSpeak’s discipline or ergonomics just because the user asks for “spec-first development.” The workflow still needs structure: persistent artifacts, bounded ownership, executable checks, and humans who can tell when the regenerated code is becoming stranger than the maintained summary suggests.

This is also where the limits become clearer. The pattern weakens in brownfield systems with years of undocumented exceptions, platform coupling, and security-sensitive state transitions. It weakens when the core difficulty lives in the runtime mechanism rather than in the stated contract. It weakens when the spec is asked to absorb every tiny implementation tweak, including changes that are faster and safer to make in code.

So the best reading of CodeSpeak is narrower but stronger: it is a maintained-control-surface strategy for long-lived agent work, not proof that software engineering has escaped the need for code-level understanding.

Why Small Prompts Sometimes Work Anyway

Critics of spec-first development often make a stronger case than the day-to-day experience of agent users supports, because they usually target the hardest cases.

A short prompt sometimes really does produce useful code. That is not an illusion. It works when the missing detail lives in widely shared priors: common CRUD flows, ordinary parsing logic, routine test scaffolding, or familiar UI and API patterns. In those cases the model is reconstructing something conventional from a compressed hint.

That is also why the same trick breaks down once the omitted detail becomes the product:

- a custom authorization lattice

- a scheduling rule that affects billing

- a retry policy that must preserve idempotency across partial failures

- a hospital escalation workflow where timing carries legal risk

Here the agent is no longer filling in harmless boilerplate. It is being asked to invent system-specific invariants. This is exactly where a better spec helps, and exactly where a vague spec becomes dangerous.
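The retry case shows why. A short prompt such as "retry failed steps" says nothing about what happens when a side effect succeeded but the acknowledgement was lost. The sketch below illustrates one common answer, an idempotency key, using an assumed `IdempotentExecutor` class with an in-memory store. A production system would need a durable store and an atomic record of the result, which this sketch deliberately omits.

```python
from typing import Callable


class IdempotentExecutor:
    """Runs a side-effecting action at most once per idempotency key."""

    def __init__(self) -> None:
        self._results: dict[str, str] = {}  # in-memory only; an assumption for the sketch

    def run(self, key: str, action: Callable[[], str]) -> str:
        if key in self._results:
            # A retry after success returns the recorded result instead of
            # repeating the side effect.
            return self._results[key]
        result = action()
        self._results[key] = result
        return result


charges: list[str] = []


def charge_customer() -> str:
    charges.append("charge")
    return f"charge-{len(charges)}"


executor = IdempotentExecutor()
first = executor.run("invoice-42", charge_customer)
retry = executor.run("invoice-42", charge_customer)  # e.g. the ack was lost
```

After both calls, `first` and `retry` hold the same value and the customer has been charged once. None of that behavior is recoverable from the word "retry" alone, which is the point: the invariant has to be stated somewhere a model can read it.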

Layered Agent Engineering Works Better Than Slogans

The practical conclusion from Symphony and CodeSpeak is not that teams must choose either prose or code as the one true artifact. The better move is to assign different jobs to different layers.

| Layer | Best maintained as | Why it matters for agents |
|---|---|---|
| Intent and operating constraints | design doc, spec, technical note | survives delegation and session resets |
| Behavioral envelope | schemas, examples, tests, interface contracts | constrains generation to a checkable boundary |
| Exact mechanism | code | encodes ordering, mutation, failure handling, and runtime coupling precisely |
| Real-world behavior | logs, traces, incidents, telemetry | reveals drift that neither prose nor tests fully predicted |

Most failures appear when one layer is asked to do all four jobs.

If code is the only source of truth, teams lose a durable way to preserve intent across refactors, migrations, and repeated delegation. If prose is treated as the only source of truth, local mechanisms become too easy to misread or regenerate incorrectly. If tests are treated as the entire spec, they only protect what they encode, and some agent workflows may even be tempted to rewrite the tests rather than satisfy them honestly.
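The test-rewriting risk is one of the few in this list that a cheap mechanical check can catch. As a sketch, assuming the workflow can see the file contents before and after an agent's change and that protected tests live under a `tests/` prefix (both assumptions), a guard might look like this:

```python
def protected_changes(
    before: dict[str, str],
    after: dict[str, str],
    protected_prefix: str = "tests/",
) -> list[str]:
    """List protected files that were added, removed, or modified."""
    paths = set(before) | set(after)
    return sorted(
        path
        for path in paths
        if path.startswith(protected_prefix) and before.get(path) != after.get(path)
    )


before = {"tests/test_retry.py": "assert delay(3) == 4", "src/retry.py": "v1"}
after = {"tests/test_retry.py": "assert True", "src/retry.py": "v2"}
print(protected_changes(before, after))  # ['tests/test_retry.py']
```

A non-empty result does not prove bad faith, since some test edits are legitimate. It does route the change to a human instead of letting the agent quietly redefine what passing means.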

This layered view also clarifies what AI changes. Agents increase the value of persistent design artifacts because those artifacts can carry meaning across resets and handoffs. They do not remove the need for a machine-facing narrow interface once exact runtime behavior matters.

Carson Gross Is a Useful Counterweight

Carson Gross, the creator and developer of the htmx JavaScript library, offers a sharp caution that belongs near the end of this discussion.

His line, “You have to write the code,” is more than a pedagogical complaint about students using AI for homework. It points to an engineering risk. If a programmer stops writing code entirely and only generates it, that programmer may also lose the habit of reading code well enough to judge accidental complexity, weak decomposition, or inappropriate shortcuts.

That warning matters here because spec-driven workflows can tempt teams into thinking the important work has moved fully upward into prompts, plans, or Markdown artifacts. It has not. The machine still runs code, and generated code can accumulate complexity that the higher-level artifact never intended.

Gross also pushes back on the analogy that prompting is simply the next step after the move from assembly to high-level languages. His objection is persuasive. Compilers are far more deterministic and structure-preserving than current LLM pipelines. A for loop or if statement in a high-level language maps to lower-level behavior through a well-defined translation process. Prompted code generation is looser. It can choose different decompositions, introduce extra abstractions, and smuggle in accidental complexity while still sounding plausible.

For agent practitioners, the implication is straightforward. Better specs help. Persistent design artifacts help. Diff-driven regeneration can help. None of those remove the need for people who can read the resulting code and decide whether the toolchain is still under control.

Spec-Driven Development Is Old. Its New Value Is Persistence.

The Symphony and CodeSpeak cases point in the same direction once the slogans fall away.

Spec-driven development did not begin with AI agents. Engineering teams have long relied on design artifacts to preserve intent before and alongside implementation. AI changes the value of those artifacts because agents forget, delegation is cheaper, and versioned documents can now act as durable control surfaces for repeated generation and repair.

Symphony shows the lower bound on precision. Once a document must capture scheduler math, retry ownership, persistence rules, and extension semantics with very little ambiguity, it starts carrying many of the same burdens as code. That does not mean the document has failed. It means the team must be careful about where the spec ends and where the implementation begins.

CodeSpeak shows a more optimistic upper bound. In bounded modules with strong priors and strong verification, a smaller maintained artifact can govern a much larger implementation surface. That pattern is real, but it is not automatic. It depends on workflow discipline and on humans who can tell when the generated mechanism has drifted away from the intended contract.

So the practical question is narrower than “spec or code.” A better question is which parts of a system deserve to live in which representation, which checks keep those representations aligned, and which people on the team still know how to read the code when the higher-level artifacts stop being enough.